At the end of each season there often seems to be disappointment from a surprisingly large number of managers at failing to achieve a rank inside the top 10k, especially when so many prominent accounts have histories in which this achievement is repeated.

Human intuition would indicate that a manager who reaches the top 10k three times in a row must be applying a near-perfect strategy, and the fact that users are able to hit the target repeatedly would suggest that top 10k is an achievable goal if you are good enough.

However, while intuition offers a lot of value, it can struggle to interpret a clean signal from something as chance-based as FPL, with several million managers playing year on year. This post aims to estimate, in a straightforward way, the probability of different groups of managers reaching this target.

Repeatability Investigation

Investigating this is not overly convoluted, and the results are not hard to interpret. The question really is: “year on year, how many managers achieve top 10k repeatedly?” If the same managers are dominating year on year, we can make an inference about how much reaching this target relies on skill versus variance.

Fortunately all users’ FPL histories are public, and I was able to detect 9,123 of the 2019/2020 season top 10k who signed up for 2020/2021 and also competed in 2018/2019. Of those managers, 594 achieved top 10k in 2018/19, suggesting ~6.5% can repeat the trick year on year.

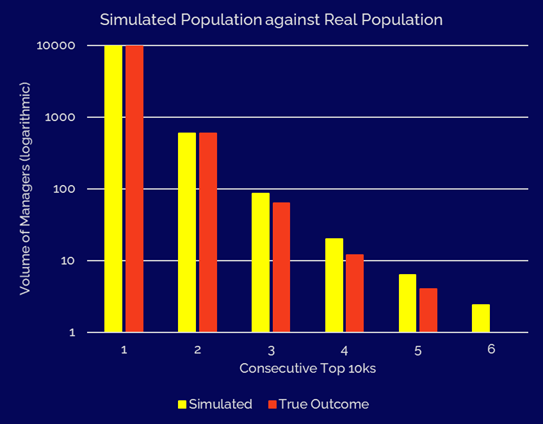

Of those 594 managers, 560 competed in 2017/2018 and 63 achieved a top 10k rank in that season too. If we go all the way back to 2014/2015, one manager has achieved top 10k 6 times in a row between 2014/2015 and 2019/2020: well done to the surprisingly unknown Yavuz Kabuk!
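As a quick sanity check, the repeat rates can be recomputed directly from the counts quoted above. This is only a minimal sketch: the variable and function names are my own, and the only inputs are the figures already given in the text.

```python
# Counts quoted in the text above.
tracked_top10k_2019_20 = 9_123   # 2019/20 top 10k managers who also played 2018/19
also_top10k_2018_19 = 594        # of those, top 10k in 2018/19 as well
played_2017_18 = 560             # of the 594, those who competed in 2017/18
also_top10k_2017_18 = 63         # of the 560, top 10k in 2017/18 as well


def repeat_rate(successes: int, eligible: int) -> float:
    """Share of eligible managers who also made the top 10k in the earlier season."""
    return successes / eligible


rate_two_in_a_row = repeat_rate(also_top10k_2018_19, tracked_top10k_2019_20)
rate_three_in_a_row = repeat_rate(also_top10k_2017_18, played_2017_18)

print(f"Top 10k in 2019/20 who also did it in 2018/19: {rate_two_in_a_row:.1%}")
print(f"Two-in-a-row managers who also did it in 2017/18: {rate_three_in_a_row:.1%}")
```

The second rate is higher than the first, which is the pattern described next: the longer the streak, the more likely a manager is to have repeated it.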

This is visualised below. We can see the results in some ways match expectations: the more times a manager repeats this feat, the more we can expect them to do so in future (i.e. they are likely to be more skilled).

However the really valuable feature we are looking at here is the repeatability rate (see the secondary X-axis and yellow plotted lines). It would appear a manager who achieved top 10k in the past 4+ seasons still only has a ~30% chance of achieving the rank in the following season. Of course in reality manager skill may vary a bit year on year (most likely in an upward direction or a plateau) which adds an extra layer of complexity.

This provides some reference points for what estimated models of the world should look like. For example, some simple maths, described in the next section, lets us quickly rule out the idea that the 1,000 best managers in the world have a 50% chance.

What Does This Mean?

The true probability that a manager has of reaching this goal in a given season is unknowable. However, based on past FPL history we can build a model that provides an estimated probability. We can also use EV modelling to provide a more detailed read of a manager, as done with the Season Review tool.

For this article there is no need to go to those exhaustive lengths. For now we can set a boundary on what a ‘reasonable world’ consistent with the outcomes we’re seeing would look like. After all, it is the broad brush stroke of expectation setting that matters here rather than a specific figure.

For this article let’s reduce the population to six groups:

- ~100 geniuses

- ~1,000 top managers

- ~10,000 very strong managers

- ~100,000 good managers

- ~1M active casual managers

- ~6M semi interested managers

Off the bat we can rule out that the 100 geniuses and 1,000 top managers have a 50% chance of making the top 10k. If they did, we would expect ~137 of them to achieve it three times in a row (way beyond the 63 measured), and that would be neglecting the fact that the ~7 million managers outside of this group can also achieve this.
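The ~137 figure is simple arithmetic, sketched here under the assumption that each season is an independent 50% shot for every one of the 1,100 managers. The function name is illustrative only.

```python
def expected_streaks(n_managers: int, p_top10k: float, seasons: int) -> float:
    """Expected number of managers reaching the top 10k in `seasons` consecutive
    seasons, assuming independent seasons with a fixed per-season probability."""
    return n_managers * p_top10k ** seasons


elite_managers = 100 + 1_000          # geniuses plus top managers
observed_three_in_a_row = 63          # measured count from the histories above

expected_three_in_a_row = expected_streaks(elite_managers, 0.5, 3)

print(f"Expected at 50%: {expected_three_in_a_row:.1f}")
print(f"Observed across all managers: {observed_three_in_a_row}")
```

At 50% the elite group alone would produce more than double the three-in-a-row managers actually found, before counting anyone else.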

So there we have a starting boundary: for the best one thousand or so managers in the world (not the estimated top 1,000, but the actual, unknowable top 1,000), the typical manager should not expect to finish inside the top 10k.

A vaguely acceptable estimated population might give the top 100 a 50% chance, the next 1,000 a 30% chance, the next 10,000 a 15% chance, the next 100,000 a 5% chance, the next 1M a 0.1% chance and the remaining 6M a 0.03% chance. This is a fast way of recreating a population that outputs more or less the same distribution of outcomes, as shown below.
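One way to check that such a population is plausible is to add up the expected number of top 10k finishes and streaks it would produce. The sketch below assumes seasons are independent and that each group's probability is fixed; the group sizes and probabilities are the rough figures from the paragraph above, not measured values.

```python
# (group label, group size, assumed per-season probability of a top 10k finish)
population = [
    ("top 100", 100, 0.50),
    ("next 1,000", 1_000, 0.30),
    ("next 10,000", 10_000, 0.15),
    ("next 100,000", 100_000, 0.05),
    ("next 1M", 1_000_000, 0.001),
    ("remaining 6M", 6_000_000, 0.0003),
]


def expected_streak_total(groups, seasons: int) -> float:
    """Expected number of managers, summed over all groups, who reach the
    top 10k in `seasons` consecutive seasons."""
    return sum(size * p ** seasons for _, size, p in groups)


one_season = expected_streak_total(population, 1)
two_in_a_row = expected_streak_total(population, 2)
three_in_a_row = expected_streak_total(population, 3)

print(f"Expected top 10k finishes in a season: {one_season:,.0f}")
print(f"Expected two in a row: {two_in_a_row:,.1f}")
print(f"Expected three in a row: {three_in_a_row:,.1f}")
print(f"Implied year-on-year repeat rate: {two_in_a_row / one_season:.1%}")
```

Under these assumptions the population fills roughly 9,650 of the 10,000 places and produces roughly 590 two-in-a-row managers, close to the 594 measured. The three-in-a-row total comes out somewhat above the 63 measured, though the measured count only includes managers who could be tracked across all three seasons.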

While we can get caught up in the detail of the true skill distribution across the 7M managers, it’s not really of interest. What is of interest, however, is that the data suggests that one of the top 1,000 managers in the world typically has less than a 50% chance of finishing top 10k, which should send a strong signal about what a reasonable target is.

In reality manager strategic skill (or focus) may not be consistent year to year, but it generally repeats quite well. For that reason we might want to consider the estimated probabilities assigned to top managers a lower bound of what is achievable based on ‘live’ manager skill. In other words, this might be a slightly cautious take.

Let’s Get Complicated (Inferences from EV Modelling)

With a hypothesis of what a world like this might look like, let’s see how the world of EV models from fplreview.com matches up.

Last season the world’s top 200k managers were put through detailed analysis using a backend version of the Season Review tool. These 200k managers provide the basis of this analysis.

These 200k managers were evaluated based on Massive Data EV as well as Market EV and xG data. For the sake of using the cleanest data, let’s focus solely on Massive Data EV.

- 58% of the top 100 managers based on the Massive Data EV metric finished in the top 10k.

- 46.7% of the next 1,000 achieved top 10k.

- 23.6% of the next 10,000 achieved top 10k.

I would suggest these success rates at the top levels can be considered slightly optimistic, as the scan only covered the managers who finished in the top 200k. There may have been a small number of managers with great EV who got seriously unlucky, to the degree that including them would impact these figures.

Also, as the metric used true gametime rather than predicted gametime (something that is on the way for the Season Review tool now that the HiveMind exists), it’s likely that managers who gained from variance in unexpected gametime events have a higher rated EV, in the same way that they have higher FPL points.

Given that the success estimates for top managers from past FPL rank repeatability can be considered slightly pessimistic, it is useful that we also have a slightly optimistic read: together these create a likely boundary within which we can suggest the true values lie.

Converging these two ‘worlds’ we may estimate the following as a rule of thumb:

- The Top 100 skilled managers have a ~55% chance

- The next 1,000 a ~35% chance

- The next 10,000 a ~17.5% chance
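To make the bracketing explicit, the sketch below sets the rule-of-thumb figures against the two reads. It assumes the pessimistic read is the rough population from the repeatability section (50%, 30%, 15%) and the optimistic read is the Massive Data EV success rates (58%, 46.7%, 23.6%); that pairing is my interpretation of the text rather than something stated outright.

```python
# group: (pessimistic read, optimistic read, rule of thumb)
estimates = {
    "top 100": (0.50, 0.58, 0.55),
    "next 1,000": (0.30, 0.467, 0.35),
    "next 10,000": (0.15, 0.236, 0.175),
}


def within_bounds(low: float, high: float, value: float) -> bool:
    """True when the value sits between the two reads (inclusive)."""
    return low <= value <= high


bracketed = {
    group: within_bounds(low, high, rule)
    for group, (low, high, rule) in estimates.items()
}

for group, (low, high, rule) in estimates.items():
    print(f"{group:>12}: {low:.1%} <= {rule:.1%} <= {high:.1%} -> {bracketed[group]}")
```

Each rule-of-thumb figure sits inside its bracket, leaning towards the pessimistic end for the next 1,000 and next 10,000.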

It’s also worth noting that as the volume of users increases and the quality of availability information improves, these probabilities will continue to drop.

Past Histories and False Positives

Many of us are now familiar with the idea of false positives and true positives due to Covid-19 testing and all the media coverage that went with it. Similar ideas can be used to try and determine how likely a manager is to actually be employing a genius-level strategy, based on their past history and our estimated probabilities assigned across the groups of users.

If we believe that the top 100 have a 55% chance of finishing in the top 10k, we would then expect only ~16 of the ~63 managers who achieved this three times in a row to be geniuses, and ~75% of those managers to sit anywhere on the range from very good to active. Indeed, if we use the populations defined above, a three-in-a-row manager is about as likely to be a top 100k manager as a top 100 manager, which may seem somewhat surprising to many.

Once we go to 6 in a row however we can be quite confident that someone like Yavuz Kabuk is indeed likely to be one of the genius managers.
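The false positive reasoning can be sketched as a small Bayes-style calculation: weight each group by how many streaks it is expected to produce, then ask what share of all streaks each group accounts for. This assumes independent seasons and uses the rough population from earlier in the article (50%, 30%, 15%, 5%, 0.1%, 0.03%); all names are illustrative.

```python
# group: (group size, assumed per-season probability of a top 10k finish)
population = {
    "top 100": (100, 0.50),
    "next 1,000": (1_000, 0.30),
    "next 10,000": (10_000, 0.15),
    "next 100,000": (100_000, 0.05),
    "next 1M": (1_000_000, 0.001),
    "remaining 6M": (6_000_000, 0.0003),
}


def streak_posterior(groups, seasons: int) -> dict:
    """P(group | top 10k in `seasons` consecutive seasons), treating seasons
    as independent and using group size as the prior weight."""
    expected = {name: size * p ** seasons for name, (size, p) in groups.items()}
    total = sum(expected.values())
    return {name: count / total for name, count in expected.items()}


three_in_a_row = streak_posterior(population, 3)
six_in_a_row = streak_posterior(population, 6)

for name in population:
    print(f"{name:>13}: 3 in a row {three_in_a_row[name]:6.1%}, "
          f"6 in a row {six_in_a_row[name]:6.1%}")

# The ~16 figure in the text uses the converged 55% estimate for the top 100.
geniuses_three_in_a_row = 100 * 0.55 ** 3
print(f"Expected top 100 managers with 3 in a row at 55%: {geniuses_three_in_a_row:.1f}")
```

Under these assumed populations the top 100 and the next 100,000 each account for roughly 15% of three-in-a-row managers, while at six in a row the top 100 share rises to roughly 65%.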

The Argument for Percentiles

Top 10k was certainly a more attainable goal and a fair target a decade ago: far fewer people played the game, and the availability of good information was nowhere near the level it is now. Many of the managers with enviable histories that people want to emulate benefitted from that time.

Using percentile ratings would go a decent distance towards clarifying past results: surely finishing top 10k when the game had ~1M users is less meaningful than finishing top 10k now.
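To illustrate how much the same rank shifts in percentile terms, here is a minimal sketch using the two rough player counts mentioned in this article (~1M then, ~7M now). The function names are hypothetical.

```python
def top_percentile(rank: int, total_managers: int) -> float:
    """Percentile band a finishing rank falls into, e.g. 1.0 means top 1%."""
    return 100 * rank / total_managers


def rank_for_percentile(percentile: float, total_managers: int) -> int:
    """Worst rank that still sits inside the given top percentile."""
    return round(total_managers * percentile / 100)


top10k_then = top_percentile(10_000, 1_000_000)   # game with ~1M users
top10k_now = top_percentile(10_000, 7_000_000)    # game with ~7M users
top_1pct_rank_now = rank_for_percentile(1, 7_000_000)

print(f"Top 10k with ~1M users: top {top10k_then:.2f}%")
print(f"Top 10k with ~7M users: top {top10k_now:.2f}%")
print(f"Top 1% with ~7M users: rank {top_1pct_rank_now:,} or better")
```

With ~7M users, a top 10k finish is roughly a top 0.14% result, about seven times more selective than the same rank in a ~1M-user game.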

Conclusion

Dealing with an incredible sample of ~7M managers in a largely chance-driven game, the statistics reveal a more nuanced story than our intuition, and a frustrating one for people who want to find ultimate meaning in the outcome over the 38 GWs.

A manager achieving 3 top 10ks in a row is, of course, more likely to be employing an excellent strategy than a user who has achieved worse ranks, but based on that history alone the likelihood that the user has a genius strategy is about equal to the likelihood that they have just a good/ok strategy. It’s also possible for a manager to change path and improve their process, and the signal from FPL points/rank is simply too slow to detect this. EV data, as in the Season Review tool, does however have the capability to produce faster and more informative feedback.

If we want a reference point for the current FPL world, the points below are a good rule of thumb:

- The Top 100 skilled managers have a ~55% chance

- The next 1,000 a ~35% chance

- The next 10,000 a ~17.5% chance

When setting a target it’s worth thinking about which skill level you believe you are a part of, or are aiming to be a part of. For some this may indeed be the Top 100 or Top 1,000. For the majority of very active users it is going to be Top 10,000 or Top 100,000.

If you take anything away from this, I would suggest giving up on the top 10k as a reasonable target unless you are approaching FPL almost as if it were a job. Top 1% is more realistic for even extremely good managers; anything beyond that is a great result, and whatever the case, chance will have the last word.